This guide was written on Ubuntu 18.04.

Component versions used: Hadoop 3.2.2 / Hive 3.1.2.


HDFS

    cd /opt/services
    wget https://mirrors.tuna.tsinghua.edu.cn/apache/hadoop/common/hadoop-3.2.2/hadoop-3.2.2.tar.gz
    tar xf hadoop-3.2.2.tar.gz && cd hadoop-3.2.2

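Edit `etc/hadoop/core-site.xml` (e.g. `vim etc/hadoop/core-site.xml`) and set the default file system:

    <configuration>
      <!-- URI that HDFS clients use to reach the NameNode -->
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://localhost:9000</value>
      </property>
    </configuration>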
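For scripted installs, the same file can be generated without opening an editor. The following is a minimal sketch, not part of Hadoop: the function name `write_core_site` and its arguments are hypothetical, and the default URI is simply the one used in this guide.

```shell
# write_core_site is a hypothetical helper, not a Hadoop command.
# Usage: write_core_site <conf_dir> [fs_uri]
write_core_site() {
  local conf_dir="$1"
  local fs_uri="${2:-hdfs://localhost:9000}"
  # Overwrites any existing core-site.xml in conf_dir.
  cat > "${conf_dir}/core-site.xml" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>${fs_uri}</value>
  </property>
</configuration>
EOF
}
```

From the Hadoop directory it could be called as `write_core_site etc/hadoop`. Note that it replaces the whole file, so it only suits a fresh installation.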

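Edit `etc/hadoop/hdfs-site.xml` (e.g. `vim etc/hadoop/hdfs-site.xml`). A single-node setup has only one DataNode, so each block is stored once:

    <configuration>
      <!-- One replica per block; the default of 3 needs at least three DataNodes -->
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
    </configuration>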

Edit `etc/hadoop/hadoop-env.sh` (e.g. `vim etc/hadoop/hadoop-env.sh`) and point Hadoop at the JDK:

    export JAVA_HOME=/opt/services/jdk
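A wrong `JAVA_HOME` is a common reason the daemons fail to start, so it can be worth checking the path before continuing. This is a small sketch; `check_java_home` is a hypothetical helper name, and `/opt/services/jdk` is the path assumed by this guide.

```shell
# check_java_home is a hypothetical helper, not part of Hadoop.
# Succeeds only if the given directory contains an executable bin/java.
check_java_home() {
  local java_home="$1"
  if [ -x "${java_home}/bin/java" ]; then
    echo "OK: ${java_home}/bin/java"
  else
    echo "ERROR: no executable bin/java under ${java_home}" >&2
    return 1
  fi
}
```

For this guide the call would be `check_java_home /opt/services/jdk`.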

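Enable passwordless SSH to localhost, which the start scripts use to launch the daemons:

    # Assumes an RSA key pair already exists at ~/.ssh/id_rsa
    cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
    # Should log in without asking for a password
    ssh localhost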
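The `>>` append above adds a duplicate line every time it is rerun, and it fails if no key pair exists yet. A possible idempotent variant is sketched below; `authorize_local_key` is a hypothetical name, and the optional directory argument exists only so the function can be tried outside `~/.ssh`.

```shell
# authorize_local_key is a hypothetical helper. It appends the public key to
# authorized_keys only if that exact line is not already present.
# Usage: authorize_local_key [ssh_dir]   (defaults to ~/.ssh)
authorize_local_key() {
  local ssh_dir="${1:-$HOME/.ssh}"
  local pub="${ssh_dir}/id_rsa.pub"
  local auth="${ssh_dir}/authorized_keys"

  mkdir -p "$ssh_dir" && chmod 700 "$ssh_dir"
  # Generate a passphrase-less RSA key pair if none exists yet.
  if [ ! -f "$pub" ]; then
    ssh-keygen -q -t rsa -P '' -f "${ssh_dir}/id_rsa"
  fi
  touch "$auth"
  # -x matches whole lines, -F treats the key as a literal string.
  if ! grep -qxF -- "$(cat "$pub")" "$auth"; then
    cat "$pub" >> "$auth"
  fi
  # Keep the file private; sshd may reject keys files that others can write to.
  chmod 600 "$auth"
}
```

After `authorize_local_key`, `ssh localhost` should log in without a password prompt.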

Format the NameNode and start HDFS:

    # First-time setup only: formatting wipes existing HDFS metadata
    bin/hdfs namenode -format
    sbin/start-dfs.sh

`jps` should then list the three HDFS daemons (the PIDs will differ):

    28657 DataNode
    28434 NameNode
    28924 SecondaryNameNode
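Checking this listing by eye gets tedious when the services are restarted often. As a sketch, the hypothetical helper `check_daemons` below reads `jps`-style output on stdin and reports which of the named daemons are absent; it is not part of Hadoop or the JDK.

```shell
# check_daemons is a hypothetical helper: it reads `jps`-style output on stdin
# and prints "missing: <name>" for every expected daemon that is not listed.
# Returns 0 if all daemons are present, 1 otherwise.
check_daemons() {
  local listing missing=0 name
  listing="$(cat)"
  for name in "$@"; do
    # jps prints "<pid> <MainClass>", so compare the second field exactly;
    # this keeps NameNode from matching SecondaryNameNode.
    if ! printf '%s\n' "$listing" | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}
```

Typical use would be `jps | check_daemons NameNode DataNode SecondaryNameNode`, which prints nothing and returns 0 when all three are running.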

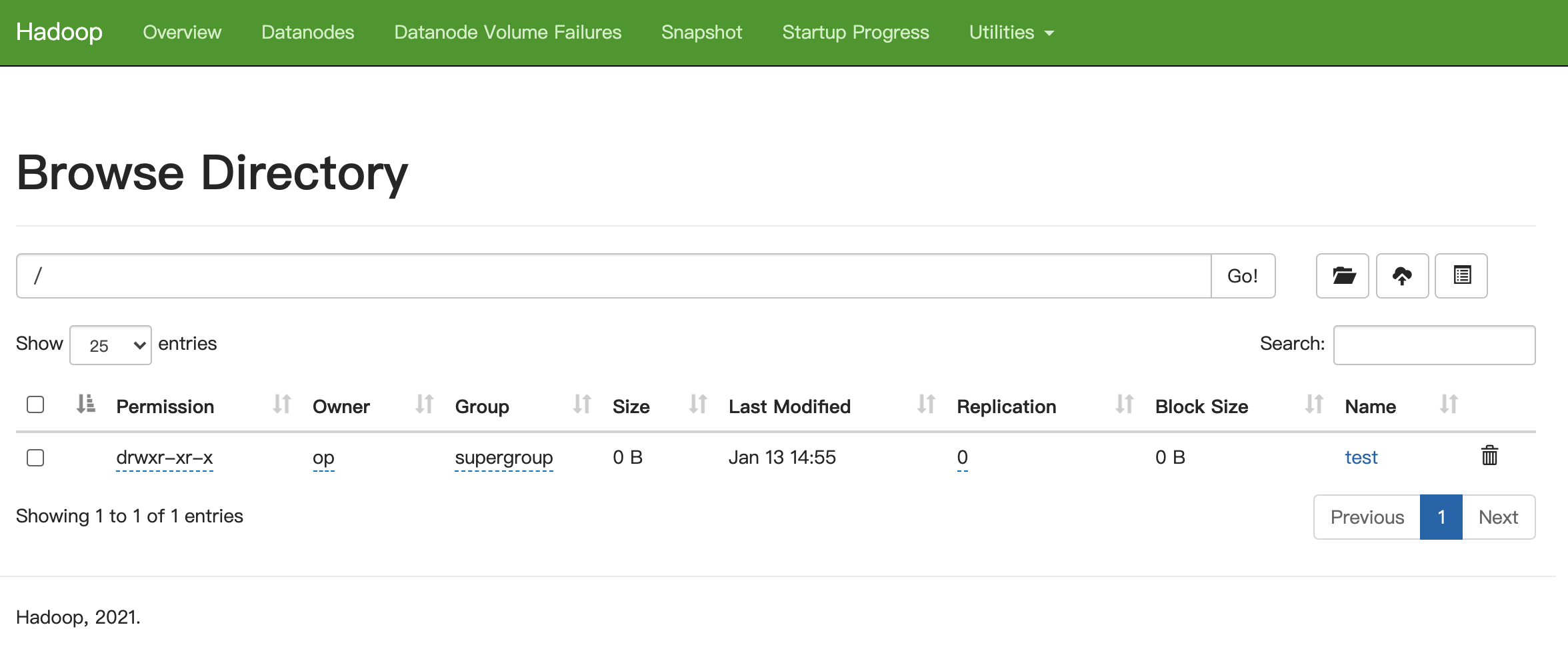

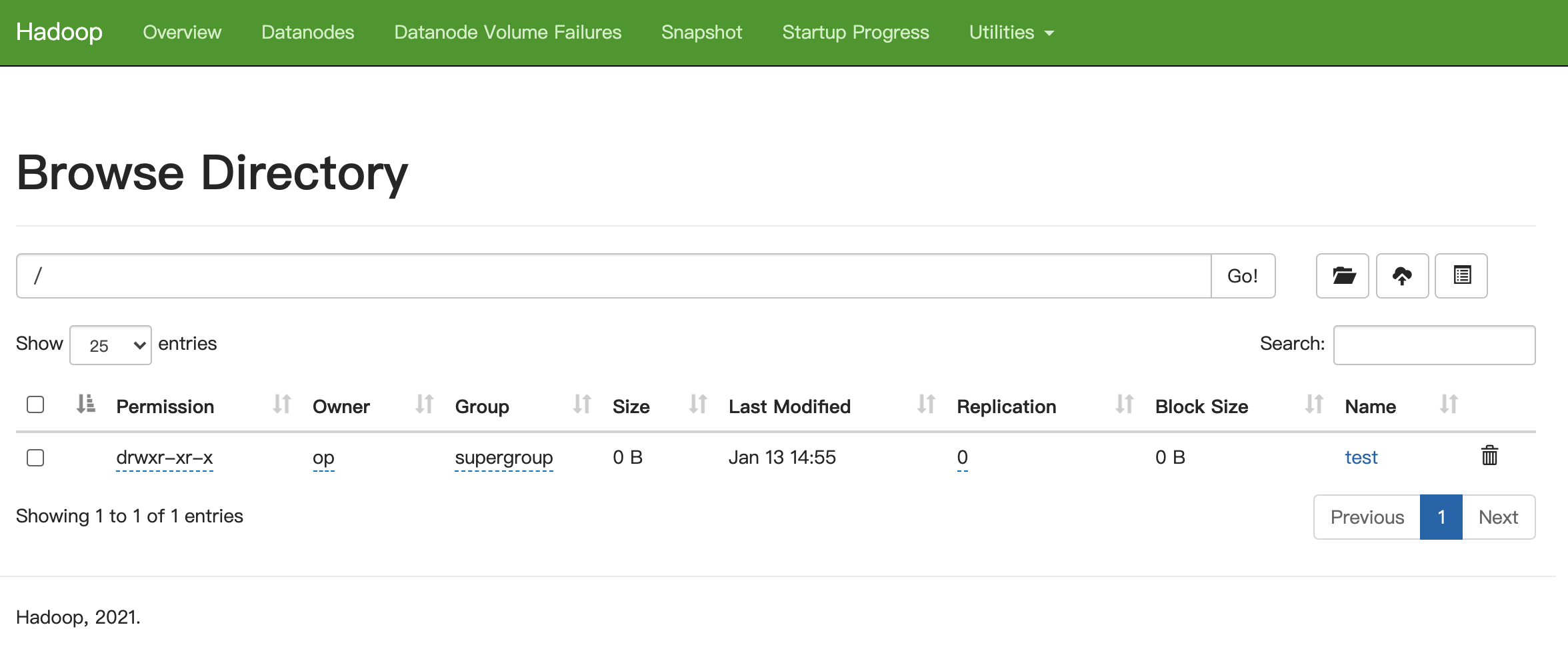

Open http://172.16.16.100:9870/explorer.html#/ in a browser to view the HDFS file browser.

Create a test directory and list the root of the file system:

    bin/hdfs dfs -mkdir /test
    bin/hdfs dfs -ls /

Output:

    Found 1 items
    drwxr-xr-x - op supergroup 0 2022-01-13 14:55 /test

YARN

Edit `etc/hadoop/mapred-site.xml` (e.g. `vim etc/hadoop/mapred-site.xml`) so that MapReduce jobs run on YARN:

    <configuration>
      <!-- Run MapReduce jobs on YARN instead of the local runner -->
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <property>
        <name>mapreduce.application.classpath</name>
        <value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value>
      </property>
      <!-- Make HADOOP_MAPRED_HOME visible to the application master and to map and reduce tasks -->
      <property>
        <name>yarn.app.mapreduce.am.env</name>
        <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
      </property>
      <property>
        <name>mapreduce.map.env</name>
        <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
      </property>
      <property>
        <name>mapreduce.reduce.env</name>
        <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>
      </property>
    </configuration>

Edit `etc/hadoop/yarn-site.xml` (e.g. `vim etc/hadoop/yarn-site.xml`) to enable the shuffle service that MapReduce needs on each NodeManager:

    <configuration>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
    </configuration>

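Start YARN (`sbin/start-yarn.sh` in the standard Hadoop layout). `jps` should then also list these two daemons:

    29459 ResourceManager
    29659 NodeManager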

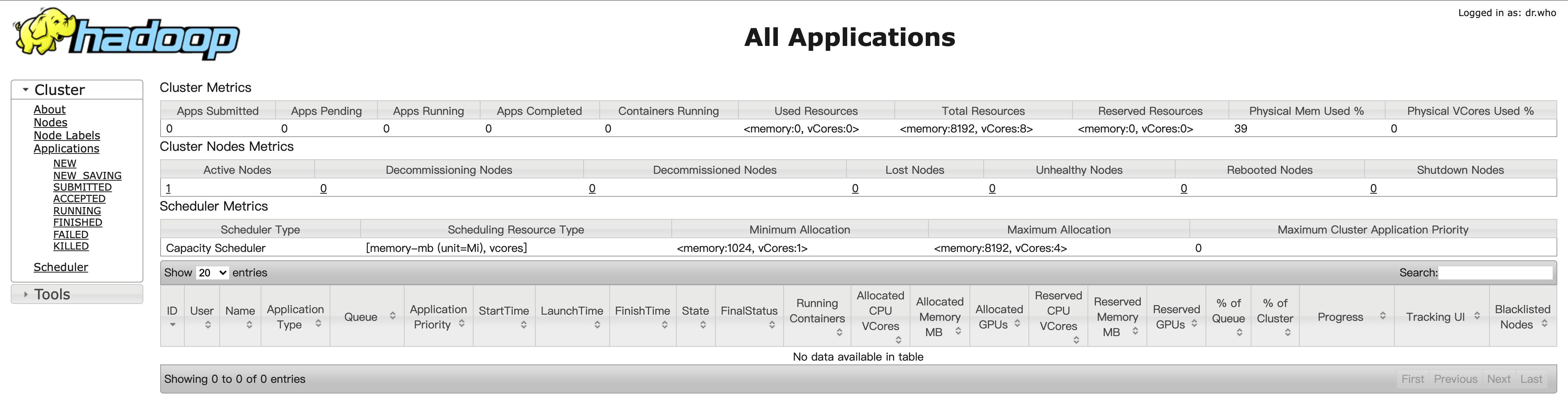

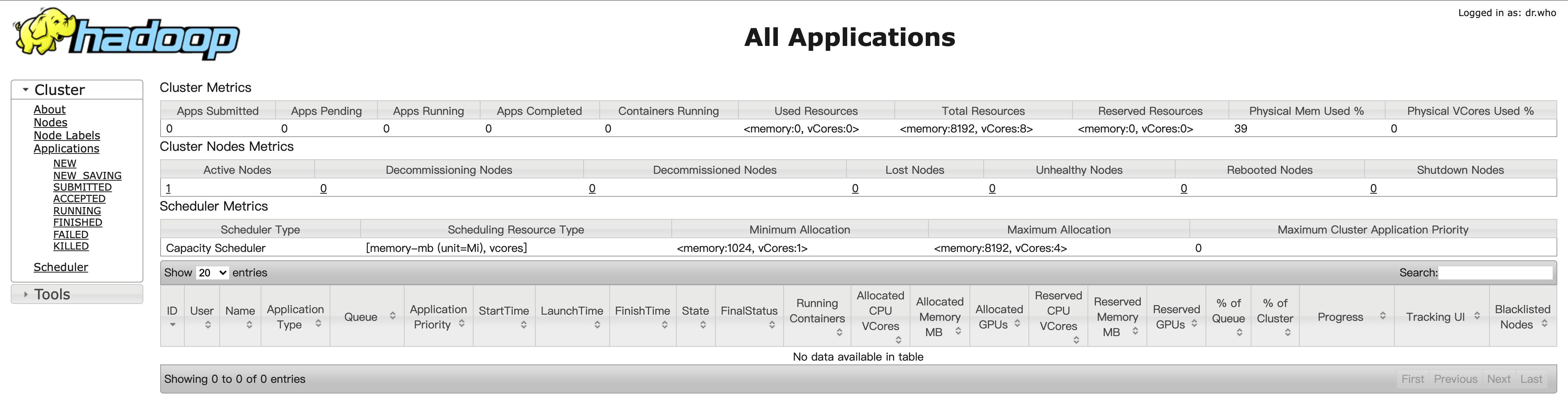

Open http://172.16.16.100:8088/cluster in a browser to view the ResourceManager UI.

Run the bundled WordCount example as a smoke test:

    # Two small input files
    echo "hello" >> /tmp/test1.txt
    echo "world" >> /tmp/test1.txt
    echo "hello" >> /tmp/test2.txt
    echo "hadoop" >> /tmp/test2.txt
    # Upload them to HDFS
    bin/hadoop fs -mkdir /input
    bin/hadoop fs -put /tmp/test*.txt /input
    # /output must not exist yet, otherwise the job fails
    bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.2.jar wordcount /input /output
    bin/hadoop fs -cat /output/part-r-00000

The job should print:

    hadoop 1
    hello 2
    world 1
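To see where these counts come from, the same computation can be reproduced locally with standard Unix tools. This is only an illustrative sketch (the function name `local_wordcount` is made up here); it does not involve Hadoop, but it mirrors what the job does: split into words, group identical words, count each group.

```shell
# local_wordcount is a hypothetical helper that mimics the WordCount job on stdin.
# tr splits on whitespace, sort groups identical words, uniq -c counts them,
# and awk reorders each line to "word<TAB>count".
local_wordcount() {
  tr -s '[:space:]' '\n' | sed '/^$/d' | LC_ALL=C sort | uniq -c | awk '{ print $2 "\t" $1 }'
}

# Same four words as in /tmp/test1.txt and /tmp/test2.txt above.
printf 'hello\nworld\nhello\nhadoop\n' | local_wordcount
```

The result matches the job output above: `hadoop` once, `hello` twice, `world` once.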

Hive

Have a MySQL server running and create a database named `hive` for the metastore.

    cd /opt/services
    wget https://mirrors.tuna.tsinghua.edu.cn/apache/hive/hive-3.1.2/apache-hive-3.1.2-bin.tar.gz
    tar xf apache-hive-3.1.2-bin.tar.gz && cd apache-hive-3.1.2-bin

Export the Hadoop and Hive configuration locations:

    export HADOOP_HOME=/opt/services/hadoop-3.2.2
    export HIVE_CONF_DIR=/opt/services/apache-hive-3.1.2-bin/conf

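Replace the Guava 19.0 jar bundled with Hive with the Guava 27.0 jar shipped with Hadoop, so that both use the same version:

    # Move the old jar out of the way rather than deleting it
    mv /opt/services/apache-hive-3.1.2-bin/lib/guava-19.0.jar ~
    cp /opt/services/hadoop-3.2.2/share/hadoop/common/lib/guava-27.0-jre.jar /opt/services/apache-hive-3.1.2-bin/lib/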
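A Guava mismatch between Hive and Hadoop typically surfaces as a `NoSuchMethodError` when Hive starts, so it helps to confirm that exactly one Guava jar is left in Hive's `lib` directory. The sketch below uses a hypothetical helper, `guava_jars`, that simply lists the Guava jars in a directory.

```shell
# guava_jars is a hypothetical helper: it prints the file names of all Guava jars
# found directly inside the given lib directory, one per line.
guava_jars() {
  local lib_dir="$1" jar
  for jar in "$lib_dir"/guava-*.jar; do
    # If the glob matched nothing, the shell leaves the pattern unexpanded; skip it.
    [ -e "$jar" ] || continue
    basename "$jar"
  done
}
```

After the swap, `guava_jars /opt/services/apache-hive-3.1.2-bin/lib` should print only `guava-27.0-jre.jar`, the same name that `guava_jars /opt/services/hadoop-3.2.2/share/hadoop/common/lib` reports.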

Configure the metastore connection to MySQL (these `javax.jdo.option.*` properties normally go in `conf/hive-site.xml`):

    <configuration>
      <!-- MySQL Connector/J uses useSSL, not ssl, to turn TLS off -->
      <property>
        <name>javax.jdo.option.ConnectionURL</name>
        <value>jdbc:mysql://127.0.0.1:3306/hive?useSSL=false</value>
      </property>
      <!-- Connector/J 8.x driver class; com.mysql.jdbc.Driver is deprecated -->
      <property>
        <name>javax.jdo.option.ConnectionDriverName</name>
        <value>com.mysql.cj.jdbc.Driver</value>
      </property>
      <property>
        <name>javax.jdo.option.ConnectionUserName</name>
        <value>zhgmysql</value>
      </property>
      <property>
        <name>javax.jdo.option.ConnectionPassword</name>
        <value>123456</value>
      </property>
    </configuration>

Copy the MySQL JDBC driver into Hive's `lib` directory:

    cp mysql-connector-java-8.0.27.jar /opt/services/apache-hive-3.1.2-bin/lib/

Initialize the metastore schema in MySQL, then start the Hive CLI:

    bin/schematool -dbType mysql -initSchema
    bin/hive

At the Hive prompt, run a quick check:

    show databases;
    use default;
    create table student(id int, name string);
    show tables;
    insert into student values(1, "xiaoming");
    select * from student;

The table data is stored in HDFS under Hive's default warehouse directory:

    /opt/services/hadoop-3.2.2/bin/hadoop fs -ls /user/hive/warehouse

References